Time for a post where I talk about news that broke after I composed this week’s Friday Five, new developments in stories linked previously, or something I want to say about a story linked previously.

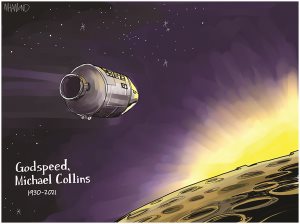

I already posted two different stories about the death of Apollo 11 Command Module Pilot Michael Collins. When Apollo 11 became the first human mission to land on the moon, I was an eight-year-old science and sci-fi geek living in the central Rockies region of the U.S., and I was glued to every newscast about it. Yesterday I found this re-posted story on NPR that includes a 1988 interview with Collins, which I found really interesting: ‘Fresh Air’ Remembers Apollo 11 Astronaut Michael Collins

Moving on…

You may have seen the video or pictures of this sweet moment that were being shared on social media Thursday and Friday: Joe Stops to Pick Flower for Jill Biden on Their Way to Ga. Rally and to Visit Jimmy and Rosalynn Carter – While en route to Georgia, the president shared a brief moment with his wife, stopping to pick her a dandelion before they boarded Marine One

While all of us normal humans saw a man plucking a flower from the lawn to hand to his wife, a gesture men in love with their wives have been making spontaneously for centuries, the people at Fox and Newsmax saw something else. And while this headline uses the word ‘mock,’ I think a better description is that they came unhinged at the sight: Fox & Newsmax Hosts Mock Joe Biden’s ‘Sweet’ Dandelion Moment with Jill — One Claims it Was ‘Planted’

One of the so-called pundits claimed that Joe had murdered the flower because he plucked it "before it had bloomed." And how did he know that it was before it had bloomed? Why, because it was in that downy stage where one can blow on it and send its seeds flying. In case you don’t know how flowers work (which this guy clearly doesn’t), the downy seed stage happens long after the flower blooms. The whole point of that downy seed stage is to spread the seeds that have been created by the flower blooming and getting pollinated.

But then the unhinged Fox host goes on to claim that blowing those seeds causes other people to get asthma. Um, no. Again, that isn’t how asthma works, and the seeds aren’t even the issue. Many asthma sufferers have attacks triggered by a high pollen count. That downy part of the dandelion is not pollen. Those are seeds. Very different things.

The latter charge is particularly eye-roll-inducing because just a few moments before, the same pundit had accused Joe of effectively committing dandelion abortion… and yet the flowers can’t reproduce without exchanging the very pollen that he mistook the seeds for, and which he now says it is a crime to spread through the air.

Ooooo, boy!

Speaking of unhinged people…

Kansas Lawmaker Arrested For Assaulting Student After Long Day Of Yelling At Teens About God. This is just a wild and terrifying story. The assault, by the way, is that the teacher grabbed a student by both shoulders, declared that he was delivering god’s wrath, kneed the kid in the testicles, and then yelled at the rest of the class, inviting any other students who wanted to come up and kick the same kid in the balls, too.

This came after hours of this substitute teacher yelling hysterically (all of it recorded and uploaded to the internet by astounded kids) about god and how important it is that they make babies and don’t let kids wind up in foster care with lesbian mothers. It’s just unreal.

And now he’s claiming that it was all staged. But the kid who got kneed in the groin isn’t going along with the story. And if you watch any of the videos, it seems fairly clear that the substitute teacher and lawmaker was not acting.

Let’s move on…

Yesterday I linked to the story about the FDA kinda sorta moving forward with possibly making a statement about eventually banning menthol in cigarettes: FDA says it will ban menthol cigarettes and all flavored cigars – The agency has long faced calls to act on menthol cigarettes, which are disproportionately smoked by Black Americans and teens just starting to use tobacco

People have been lobbying the FDA to ban menthol cigarettes for many years. So it is a little irritating that eight years after it officially began studying the question, its big new announcement is that it will publish a policy sometime soonish proposing the ban… and begin yet another public comment period.

I am illustrating this section of the post with a picture of a pack of Newport brand menthol cigarettes for a reason. Those used to be my favorites. Yes, until I quit 26 years ago, I not only smoked cigarettes, but I smoked menthols.

Why, you may ask, have people been asking the FDA to ban menthol cigarettes? Well, the answer is essentially the same as if you asked me why, back in the day, I preferred menthols. On its own, menthol is not more dangerous than the ordinary ingredients in tobacco smoke, but what menthol does (besides adding a cool, tingly taste) is numb nerve endings. One of the more popular brands of menthol cigarettes is named Kool because that numbing effect and the taste create an illusion that the smoke you are inhaling is less hot (and therefore less burning) than the smoke from ordinary cigarettes.

So smoking menthols means that you are less likely to cough or feel a burning sensation and so forth. Some studies have indicated that people who smoke menthol cigarettes smoke more cigarettes per day than those who don’t, and the widely shared suspicion is that the menthol’s numbing/cooling effect is what leads to that.

There are other studies showing that regular menthol smokers, if they can’t get a menthol cigarette during a particular time period, smoke less. And there are also studies indicating that not being able to get menthols at all would increase the number of people who decide to quit each year by the tens of thousands.

And given how deadly smoking is, that would be a good thing.

But the main reason I wanted to write about this ban is because it’s a great excuse to tell you how I accidentally quit smoking 26 years ago.

That’s right, I didn’t mean to quit smoking (even though I really knew that I should)…

How did that happen, you ask? Well, I got this really, really awful case of bronchitis. My doctor prescribed a seven-day course of the antibiotic Zithromax, and by day five the bronchitis seemed to be letting up, but about three days after the last pill, the bronchitis came back with a vengeance.

So my doctor prescribed a ten-day course of clarithromycin, another antibiotic. After several days on the clarithromycin, the worst of the bronchitis symptoms let up, but I still had a wheeze in my lungs and shortness of breath. Mostly, the improvement was that I wasn’t keeping myself awake all night coughing. And again, a couple of days after the last tablet, the symptoms got worse again.

So, after taking another x-ray and running some more tests to confirm that it was a bacterial infection of my bronchial tubes, the doctor prescribed Augmentin. Augmentin is a combination of the very old, basic antibiotic amoxicillin plus clavulanate potassium, which blocks the beta-lactamase enzymes that many drug-resistant bacteria use to break down antibiotics like amoxicillin.

After just four days of that ten-day regimen, the cough had faded away, the wheezing was almost entirely gone, the shortness of breath was gone, and my fever had dropped to low-grade. I kept taking the pills until they were gone, but I felt so much better.

And it was around this time, when I still had four or five days of the third antibiotic to go, that I realized I couldn’t find my open pack of cigarettes. I searched and searched. My late husband suggested I just pull a fresh pack out of the carton, or take one of his (except he smoked Marlboro Reds – no menthol, so no thanks).

For whatever reason, I was feeling extra stubborn. I was sure that I had more than half a pack of cigarettes somewhere that I had just smoked from, right? Ray asked, "When did you have your last cigarette?" And I started to say, "Oh, it must have been a couple hours ago? I think…? I was at my desk…"

So I went up to the computer room and started looking more thoroughly around the desk. Back then, I kept a pile of mail that needed attending to on the desk. Items were added as they came in, and periodically I’d go through it, pay bills that were coming due, and so forth. Inside the pile, beneath seven days’ worth of new incoming mail, I found the open pack of cigarettes.

I pulled out a cigarette, put it in my mouth, and reached for the lighter.

And then I thought, "This means it has been seven days since my last cigarette." I had been too busy coughing and wheezing and choking and being miserable with the bronchitis for the nicotine craving to rise to the surface. I put the cigarette back in the pack, walked downstairs, and told Ray where I had found the pack and what that meant. "I went seven days without smoking and never even noticed. Let’s see if I can go eight."

For the next couple weeks I said a variant of that to myself each day. "I’ve gone eight days, let’s see if I can do nine," and so on.

Sometime in the mid-twenties I just stopped counting days.

There is a coda to add. For years, every time I caught a cold, even a mild head cold, it would turn into bronchitis and I’d have to take antibiotics. At least three times every winter I’d get bronchitis. It was about three years after I quit smoking that I realized that in all that time, I hadn’t had a cold turn into bronchitis.

This is not to say that I have never had bronchitis again, but now it happens at most every other year or so, and even then, only if I have a severe cold or the flu that goes on for a week or more. So, in case the danger of cancer (and watching a number of my loved ones die of smoking-related illnesses over the years) wasn’t enough reason to quit, I’m happy that I’m not constantly getting that painful choking cough in the middle of the night several times a year.

So, yeah, speaking from personal experience: anything that will help more people quit smoking is a good thing!